Agentic AI System Architecture is the blueprint for systems where software agents act, reason, and coordinate to complete tasks across services and users. For architects choosing a stack, it helps to think in layers: orchestration, models and model hubs, vector stores for memory, reinforcement learning and decision modules, connectivity for real-time interaction, and the deployment fabric that ties everything together. Below I map practical options in each layer and show how tools like CrewAI, LiveKit, LangGraph, and LangChain fit into everyday projects.

What the architecture needs to solve

At its core, an agentic system must manage state, route decisions, run models safely, and interact with external systems.

That means planners and executors, a way to store contextual memory, connectors for third-party APIs, a runtime for long-lived agents, and observability so you can understand behavior.

The choices you make at each layer determine latency, cost, maintainability, and the kinds of tasks your agents can handle.
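To make the planner and executor split concrete, here is a minimal sketch in plain Python. Everything in it is an assumption for illustration: `AgentState`, `plan`, `run_agent`, and the two stub tools are hypothetical names, and a real planner would call a model rather than return a fixed list of steps.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    goal: str
    # Each entry is (step, result) so the run can be inspected afterwards.
    history: list[tuple[str, str]] = field(default_factory=list)


def plan(goal: str) -> list[str]:
    # Hypothetical planner: a real one would ask a model to produce steps.
    return [f"look up: {goal}", f"summarize: {goal}"]


def run_agent(goal: str, tools: dict[str, Callable[[str], str]]) -> AgentState:
    """Executor: route each planned step to a tool and record the outcome."""
    state = AgentState(goal=goal)
    for step in plan(goal):
        tool_name, _, arg = step.partition(": ")
        tool = tools.get(tool_name)
        result = tool(arg) if tool else f"no tool named {tool_name!r}"
        state.history.append((step, result))
    return state


# Stub tools standing in for real connectors.
tools = {
    "look up": lambda query: f"3 documents found for {query}",
    "summarize": lambda topic: f"summary of {topic}",
}
state = run_agent("refund policy", tools)
for step, result in state.history:
    print(step, "->", result)
```

Keeping the state in one explicit object is what later makes memory, logging, and recovery easier to bolt on.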

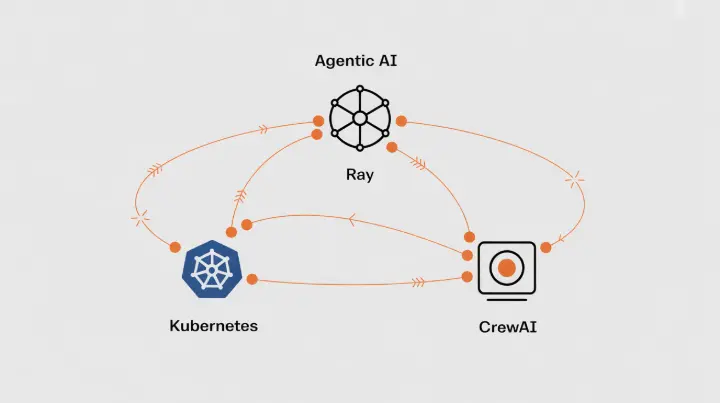

Orchestration and runtime

Orchestration coordinates workflows, scheduling, and scale. If you need distributed compute, consider Kubernetes for container orchestration. For actor- and task-oriented runtimes, look at Ray, which provides parallelism and low-latency work distribution.

Dagster, Prefect, and Airflow are useful for data pipelines and scheduled jobs. For real-time agent coordination and dynamic task assignment, CrewAI provides higher-level primitives that focus on multi-agent workflows and role-based routing.

Pair the orchestration layer with a lightweight queue or message broker, such as Redis (lists or streams) or RabbitMQ, so bursty events are buffered instead of overwhelming your workers.
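The buffering idea can be sketched without any broker at all. The example below uses Python's standard `queue.Queue` and threads as a stand-in for Redis or RabbitMQ; `run_workers` and its parameters are hypothetical names, and a production setup would use a real broker client instead.

```python
import queue
import threading


def run_workers(events, handler, num_workers=4, max_pending=100):
    """Drain a burst of events through a bounded queue and a fixed worker pool."""
    pending = queue.Queue(maxsize=max_pending)  # bounded, so producers feel backpressure
    results, errors = [], []
    lock = threading.Lock()

    def worker():
        while True:
            event = pending.get()
            if event is None:  # sentinel: no more work for this worker
                return
            try:
                out = handler(event)
                with lock:
                    results.append(out)
            except Exception as exc:  # keep the worker alive on a bad event
                with lock:
                    errors.append((event, exc))

    threads = [threading.Thread(target=worker) for _ in range(num_workers)]
    for t in threads:
        t.start()
    for event in events:
        pending.put(event)  # blocks while the queue is full
    for _ in threads:
        pending.put(None)  # one sentinel per worker
    for t in threads:
        t.join()
    return results, errors


results, errors = run_workers(range(20), lambda x: x * 2, num_workers=3, max_pending=5)
print(sorted(results), errors)
```

Because `None` is used as the shutdown sentinel, this sketch assumes real events are never `None`. Results arrive in completion order, not submission order.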

Model hosting and model hubs

Model hubs let you pick models and update them independently of your runtime. The Hugging Face Hub is a common place to find, host, and version models in the cloud, while Ollama is a common way to pull and run models locally.

For latency-sensitive tasks you may prefer to run models close to the runtime using containers or specialized inference servers.

Model versioning matters. Use a model registry and CI/CD to promote models from staging to production. When models are large, consider quantization and runtime acceleration with ONNX Runtime or TensorRT.
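As a rough illustration of gated promotion, the sketch below keeps model versions in an in-memory registry and only promotes a candidate whose evaluation score clears a threshold. `ModelVersion`, `ModelRegistry`, the stage names, and the scores are all hypothetical; a real registry product will have its own API.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ModelVersion:
    name: str
    version: int
    eval_score: float
    stage: str = "staging"  # "staging", "production", or "archived"


class ModelRegistry:
    def __init__(self) -> None:
        self._versions: dict[tuple[str, int], ModelVersion] = {}

    def register(self, model: ModelVersion) -> None:
        self._versions[(model.name, model.version)] = model

    def production(self, name: str) -> Optional[ModelVersion]:
        for model in self._versions.values():
            if model.name == name and model.stage == "production":
                return model
        return None

    def promote(self, name: str, version: int, min_score: float) -> ModelVersion:
        candidate = self._versions[(name, version)]
        if candidate.eval_score < min_score:
            raise ValueError(
                f"{name} v{version} scored {candidate.eval_score}, below {min_score}"
            )
        current = self.production(name)
        if current is not None:
            current.stage = "archived"  # kept around so a rollback is possible
        candidate.stage = "production"
        return candidate


registry = ModelRegistry()
registry.register(ModelVersion("router", 1, eval_score=0.91))
registry.register(ModelVersion("router", 2, eval_score=0.62))
registry.promote("router", 1, min_score=0.8)
```

In a CI/CD pipeline, the `promote` call would typically be the last step of a job that has just run the evaluation suite.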

Memory and vector databases

Agents rely on context and memory. Vector databases provide the index for semantic search and retrieval. Milvus, Weaviate, Pinecone, and Chroma are common choices.

Milvus and Weaviate provide flexible deployment options and strong querying features. Pinecone offers managed scaling and simplicity.

Chroma works well for embedded deployments and local testing. Each has trade-offs in latency, cost, and operational complexity.

Design your schema so short-term context and long-term memory live in separate collections. Keep metadata and provenance with embeddings to make audits and rollbacks simpler.
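One way to picture that schema is the in-memory sketch below, which keeps two collections and stores provenance next to every embedding. It is not the API of any of the databases named above: `MemoryRecord`, `MemoryStore`, the collection names, and the two-dimensional toy embeddings are assumptions made for illustration.

```python
import math
from dataclasses import dataclass


@dataclass
class MemoryRecord:
    text: str
    embedding: list[float]
    source: str      # provenance: where this memory came from
    created_at: str  # ISO date, useful for retention and rollback


def cosine(a: list[float], b: list[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


class MemoryStore:
    def __init__(self) -> None:
        # Separate collections so short-term context can be cleared independently.
        self.collections: dict[str, list[MemoryRecord]] = {
            "short_term": [],
            "long_term": [],
        }

    def add(self, collection: str, record: MemoryRecord) -> None:
        self.collections[collection].append(record)

    def search(self, collection: str, query: list[float], k: int = 3) -> list[MemoryRecord]:
        ranked = sorted(
            self.collections[collection],
            key=lambda r: cosine(query, r.embedding),
            reverse=True,
        )
        return ranked[:k]


store = MemoryStore()
store.add("long_term", MemoryRecord("Customer prefers email", [1.0, 0.0], "crm:note-17", "2024-01-01"))
store.add("long_term", MemoryRecord("Invoice is overdue", [0.0, 1.0], "billing:inv-3", "2024-01-02"))
store.add("short_term", MemoryRecord("User asked about email", [0.9, 0.1], "chat:turn-4", "2024-01-03"))
hits = store.search("long_term", [1.0, 0.0], k=1)
print(hits[0].text, "from", hits[0].source)
```

Because every hit carries its `source`, an audit can trace an agent's answer back to the record that influenced it.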

Agent frameworks and orchestration libraries

LangChain is a widely used toolkit for building agent pipelines and prompt chains.

LangGraph complements that by enabling graph-style orchestration of prompts and control flow, which is useful when the decision process involves branching and complex data flows.

CrewAI brings an agent-centered control plane that helps assign tasks to specialized agents, manage permissions, and track outcomes. Use these frameworks as building blocks rather than end-to-end platforms.

They help accelerate development and provide patterns for conversations, tool integration, and sequential decision making.
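The graph idea itself is small enough to sketch in plain Python. The code below does not use LangGraph's API; it is a hypothetical runner (`run_graph`) where each node is a function that mutates a shared state dictionary and returns the name of the next node, or `None` to stop.

```python
from typing import Callable, Optional

State = dict
Node = Callable[[State], Optional[str]]


def run_graph(nodes: dict[str, Node], start: str, state: State, max_steps: int = 20) -> State:
    """Walk the graph from `start` until a node returns None."""
    state.setdefault("trace", [])
    current: Optional[str] = start
    steps = 0
    while current is not None:
        if steps >= max_steps:
            raise RuntimeError("graph did not terminate")  # guard against cycles
        state["trace"].append(current)
        current = nodes[current](state)
        steps += 1
    return state


def classify(state: State) -> str:
    # Stand-in for a model call that decides which branch to take.
    return "refund" if "refund" in state["message"].lower() else "fallback"


def refund(state: State) -> None:
    state["reply"] = "Routing to the refund workflow"
    return None


def fallback(state: State) -> None:
    state["reply"] = "Handing off to a human"
    return None


nodes = {"classify": classify, "refund": refund, "fallback": fallback}
result = run_graph(nodes, "classify", {"message": "I want a refund"})
print(result["trace"], result["reply"])
```

Recording the visited nodes in `trace` gives you a cheap execution log, which is one reason explicit graphs tend to be easier to debug than nested prompt chains.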

Real time interaction and multimodal I/O

For live audio, video, and interactive sessions, LiveKit is a strong option. It provides real-time media streams and integrates with web and mobile clients.

If your agents need to join calls, share screens, or present synthesized speech, LiveKit bridges the media layer and your runtime.

Combine it with specialized transcription and speech synthesis services if you need higher accuracy or language coverage.

For visual input, consider a small image processing pipeline that normalizes frames and extracts features before passing them to your decision modules.

Reinforcement learning and decision making

When tasks benefit from learning from interaction, use RL frameworks. Ray RLlib is a scalable option that integrates with Ray's distributed compute. Stable Baselines3 is practical for prototyping and research.

If you plan to learn online, keep safety and constraints at the forefront. Use a simulated environment for experimentation and define clear rollout gates before any online updates.
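A rollout gate can be as simple as a function that compares a candidate policy against the current baseline on simulated episodes. The sketch below is one hedged interpretation: `passes_rollout_gate`, its thresholds, and the sample returns are hypothetical, and a real gate would likely add statistical tests and more safety metrics.

```python
from statistics import mean


def passes_rollout_gate(
    baseline_returns: list[float],
    candidate_returns: list[float],
    candidate_violations: int,
    min_improvement: float = 0.0,
    max_violation_rate: float = 0.0,
) -> bool:
    """Allow rollout only if the candidate is safe and at least as good as the baseline."""
    if not baseline_returns or not candidate_returns:
        return False  # no evidence, no rollout
    violation_rate = candidate_violations / len(candidate_returns)
    improvement = mean(candidate_returns) - mean(baseline_returns)
    return violation_rate <= max_violation_rate and improvement >= min_improvement


# Episode returns collected in simulation (made-up numbers).
baseline = [1.0, 1.2, 0.9]
candidate = [1.4, 1.5, 1.3]
print(passes_rollout_gate(baseline, candidate, candidate_violations=0))
```

Checking the constraint before the reward keeps a high-scoring but unsafe policy from slipping through.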

Data connectors and tool use

Agents are useful only if they can act on your systems. Build adapters for common services such as databases, CRMs, ticketing systems, and cloud provider APIs. LangChain provides tool and integration abstractions for this, and LangGraph workflows can call those tools from graph nodes.

Favor idempotent operations for external actions and implement transactional semantics when possible. For sensitive actions, require human confirmation or a verification step.
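One common way to get idempotency is to attach a key to every external action and cache the result under that key, so a retry returns the earlier outcome instead of repeating the side effect. The sketch below combines that with an approval callback for sensitive actions. `ActionExecutor`, the key format, and the `approve` callback are hypothetical, and a real system would persist the keys rather than hold them in memory.

```python
from typing import Any, Callable


class ActionExecutor:
    def __init__(self, approve: Callable[[str], bool]) -> None:
        self._results: dict[str, Any] = {}  # idempotency key -> stored result
        self._approve = approve             # stand-in for a human confirmation step

    def execute(self, key: str, action: Callable[[], Any], sensitive: bool = False) -> Any:
        if key in self._results:
            return self._results[key]  # retry: reuse the earlier outcome
        if sensitive and not self._approve(key):
            raise PermissionError(f"action {key!r} was not approved")
        result = action()
        self._results[key] = result
        return result


calls = []


def create_ticket() -> str:
    calls.append("create")
    return f"ticket-{len(calls)}"


executor = ActionExecutor(approve=lambda key: key.startswith("approved:"))
first = executor.execute("ticket:order-42", create_ticket)
second = executor.execute("ticket:order-42", create_ticket)  # retried, not repeated
print(first, second, len(calls))
```

The approval check runs before the action, so a rejected request leaves no side effects and no cached result.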

Containerization and deployment

Containerize components with Docker for consistent builds. Use Kubernetes for production-scale deployments and autoscaling. If you prefer managed cloud operations, consider Amazon EKS, Google Kubernetes Engine (GKE), or Azure Kubernetes Service (AKS).

For smaller teams, managed services for vector DBs and model hosting reduce maintenance burden. Keep a separate staging cluster that mirrors production for safety testing.

Observability, testing, and governance

Instrumentation is essential. Log agent decisions, prompt inputs, retrieved memory snippets, and action outcomes. Use tracing and structured logs to reconstruct agent behavior.
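A lightweight way to start is one JSON line per event, tied together by a trace ID. The helper names (`make_event`, `reconstruct`) and the field names below are assumptions for illustration; a tracing library or log pipeline would replace them in practice.

```python
import json
import time
import uuid


def make_event(trace_id: str, kind: str, **fields) -> str:
    """Serialize one agent event as a JSON line."""
    record = {"ts": time.time(), "trace_id": trace_id, "kind": kind, **fields}
    return json.dumps(record, sort_keys=True)


def reconstruct(lines: list[str], trace_id: str) -> list[dict]:
    """Return the events of one agent run, in the order they were logged."""
    events = [json.loads(line) for line in lines]
    return [event for event in events if event["trace_id"] == trace_id]


trace_a, trace_b = str(uuid.uuid4()), str(uuid.uuid4())
log_lines = [
    make_event(trace_a, "prompt", text="Summarize ticket 17"),
    make_event(trace_b, "prompt", text="Draft a reply"),
    make_event(trace_a, "retrieval", snippets=["Ticket 17: login fails"]),
    make_event(trace_a, "action", tool="post_comment", outcome="ok"),
]
for event in reconstruct(log_lines, trace_a):
    print(event["kind"], {k: v for k, v in event.items() if k not in ("ts", "trace_id", "kind")})
```

Filtering by `trace_id` is what lets you replay a single agent run even when many runs are interleaved in the same log.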

Implement unit tests for prompt chains, integration tests for connectors, and adversarial tests that probe edge cases.
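Prompt chains become testable once the model is passed in as a plain callable, because a stub can then replace it. The functions below (`build_prompt`, `answer`) and the prompt layout are hypothetical; the `test_` functions use bare assertions so a runner such as pytest could collect them.

```python
from typing import Callable


def build_prompt(question: str, snippets: list[str]) -> str:
    context = "\n".join(f"- {s}" for s in snippets) or "- (no context)"
    return f"Context:\n{context}\n\nQuestion: {question}\nAnswer:"


def answer(question: str, snippets: list[str], model: Callable[[str], str]) -> str:
    # The model is injected so tests can substitute a deterministic stub.
    return model(build_prompt(question, snippets)).strip()


def test_prompt_includes_context():
    prompt = build_prompt("What is the SLA?", ["SLA is 99.9%"])
    assert "- SLA is 99.9%" in prompt
    assert prompt.endswith("Answer:")


def test_chain_strips_model_output():
    seen = []

    def stub_model(prompt: str) -> str:
        seen.append(prompt)
        return "  99.9%\n"

    assert answer("What is the SLA?", ["SLA is 99.9%"], stub_model) == "99.9%"
    assert "Question: What is the SLA?" in seen[0]


def test_empty_context_is_explicit():
    # Edge case: the prompt should say so when retrieval returns nothing.
    assert "- (no context)" in build_prompt("Anything?", [])
```

These tests check the chain's plumbing, not model quality; adversarial and evaluation tests against a real model still belong in a separate, slower suite.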

Maintain an audit trail for all actions that involve external systems. Governance should include guardrails for model updates, role-based permissions, and data retention policies.

Example stacks

Open-source-focused stack

- Orchestration: Kubernetes with Ray for distributed compute

- Agent frameworks: LangChain and LangGraph for prompt flows and data orchestration

- Vector DB: Milvus or Chroma in a self-hosted configuration

- Real time: LiveKit for media sessions

- RL: Ray RLlib

- Model host: Self-hosted Hugging Face models packaged in containers

Managed-cloud-focused stack

- Orchestration: Managed Kubernetes or serverless functions for event-driven tasks

- Agent frameworks: CrewAI for multi-agent coordination with LangChain for prompt flows

- Vector DB: Pinecone or Weaviate managed service

- Real time: Hosted LiveKit or a managed alternative

- RL: Managed experimentation with Ray on cloud nodes

- Model host: Cloud model endpoints with a model registry

Best practices for architects

Start small with a clear separation of concerns. Keep the decision logic modular so you can swap out models or vector stores. Instrument aggressively and design rollback procedures for model changes.

Treat memory as a first-class artifact and ensure provenance. Emphasize safety by requiring a human in the loop for high-risk actions. Finally, choose components that match your team's skills and operational budget.

Conclusion

Agentic AI System Architecture is about composing reliable layers so agents can reason, act, and recover. Tools like CrewAI, LiveKit, LangGraph, and LangChain are part of an ecosystem that makes building agent-driven systems faster.

The right combination depends on your latency needs, scale, and how much operational overhead you can absorb. Focus on modular design, clear observability, and incremental rollout so you can iterate without risking core systems.